How I Cut AI Feature Costs by 61% with a Single, Optimized LLM Prompt

A Product Management case study on replacing complex agentic AI architectures with optimized prompt engineering to reduce costs and latency.

The Challenge

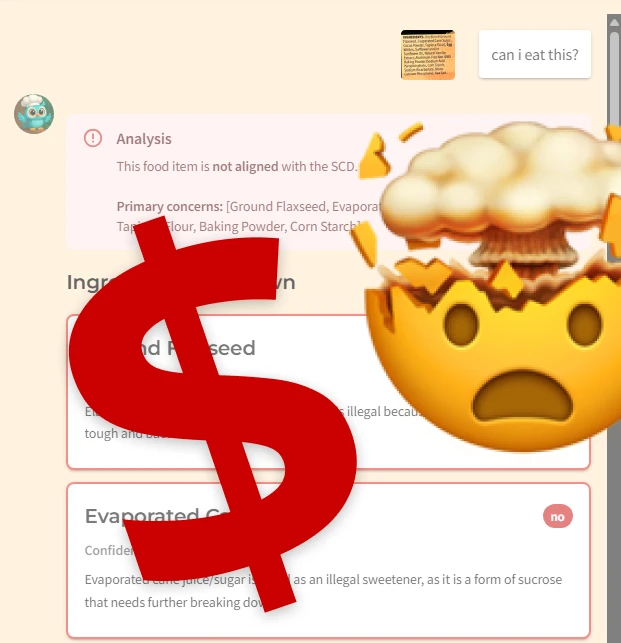

For users managing complex health conditions, reading ingredient labels isn’t just a chore; it’s a constant source of anxiety. My health SaaS app, Meadow Mentor, serves people who follow therapeutic diets to manage their health conditions. They often struggle to identify unsafe ingredients in packaged food and are already exhausted by the work of managing their condition, so I set out to build an AI-powered feature to help.

The challenge? AI features, especially powerful ones, can be notoriously expensive to run. The popular ‘agentic’ approach, while capable, felt overly complex and costly for this problem.

I wanted to build an ingredient label analysis feature that was fast, accurate, and simple to maintain. As a solo founder, I couldn’t treat engineering simplicity as a preference. It was a business necessity, because the feature had to deliver consistent results while staying inexpensive to run.

My Roles

As the founder and product lead, I managed the entire lifecycle of this feature.

- Product Management: Discovering and defining the user problem, setting the success metrics (accuracy, cost, latency), and managing the development roadmap.

- UX Design: Prototyping the user experience and determining how the analysis would be presented for maximum clarity.

- AI Engineering & Evaluation: Designing and iteratively testing the prompt engineering to achieve my performance and cost targets.

The Process (Optimization Journey)

Discovery & Research

A key discovery came when I prototyped the feature in Google’s AI Studio. In one of its responses, the model explained that it had cleaned up the ingredient list it parsed from my test image. It removed marketing words like “Non-GMO” and “Natural,” and it split “and/or” ingredients into two separate entries so that each one could be checked for the user’s safety.

This revealed a key user need: users don’t just need ingredients analyzed; they need them parsed and cleaned first for accuracy. I liked those steps so much that I wrote them into the System Prompt instructions. That made the AI parse ingredients the same way every time, which produced consistent results.
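
In Meadow Mentor the model itself does this cleanup, following the System Prompt. To make the two rules concrete, here is a minimal deterministic Python sketch of them. The `clean_ingredients` name, the two-term marketing list and the splitting heuristics are illustrative assumptions. The sketch does not handle sub-ingredients nested in parentheses or shared words such as “canola and/or sunflower oil”.

```python
import re

# Illustrative marketing terms taken from the examples above ("Non-GMO", "Natural").
# A production list would be longer; this list is an assumption.
MARKETING_TERMS = ["non-gmo", "natural"]


def clean_ingredients(label_text: str) -> list[str]:
    """Split a raw ingredient label into cleaned, individual ingredients."""
    # Drop a leading "Ingredients:" header if present.
    text = re.sub(r"^\s*ingredients\s*:\s*", "", label_text, flags=re.IGNORECASE)
    cleaned: list[str] = []
    for item in text.split(","):
        # Treat "X and/or Y" as two ingredients so both get checked.
        for part in re.split(r"\s+and/or\s+", item, flags=re.IGNORECASE):
            # Remove marketing terms as whole words, case-insensitively.
            for term in MARKETING_TERMS:
                part = re.sub(rf"\b{re.escape(term)}\b", "", part, flags=re.IGNORECASE)
            # Collapse leftover whitespace and drop a trailing period.
            part = " ".join(part.split()).rstrip(".")
            if part:
                cleaned.append(part)
    return cleaned
```

For example, `"Ingredients: Natural Cane Sugar, Non-GMO Canola Oil and/or Sunflower Oil, Sea Salt."` becomes `["Cane Sugar", "Canola Oil", "Sunflower Oil", "Sea Salt"]`.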

Strategy & Ideation

My core hypothesis was that a single, structured System Prompt workflow would perform better than a complex agentic architecture for this specific task.

To test this, I first established a baseline. I defined it as the first setup that achieved 100% accuracy on my test set, which was Gemini 2.5 Flash with thinking mode and Google Search enabled. That setup used 3,595 tokens and took 21 seconds to respond, which became the target to beat.
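
LLM outputs are non-deterministic, so a baseline is more trustworthy when tokens and latency are averaged over several runs. Below is a minimal Python sketch of such a measurement loop. `call_model` is a hypothetical stand-in for a wrapper around whichever API you use. It sends one request and returns the total token count the API reports.

```python
import statistics
import time
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class RunStats:
    mean_tokens: float
    mean_latency_s: float


def measure(call_model: Callable[[], int], runs: int = 5) -> RunStats:
    """Call the model `runs` times; average token counts and wall-clock latency.

    `call_model` is a hypothetical wrapper that sends one request and returns
    the total token count reported by the API.
    """
    if runs < 1:
        raise ValueError("runs must be at least 1")
    tokens: list[int] = []
    latencies: list[float] = []
    for _ in range(runs):
        start = time.perf_counter()
        tokens.append(call_model())
        latencies.append(time.perf_counter() - start)
    return RunStats(statistics.mean(tokens), statistics.mean(latencies))
```

Running this once per configuration (model, thinking mode, Search on or off) produces rows that can be compared directly.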

Design & Prototyping

With the technical strategy defined, I designed how the results would be presented to the user. My users are already overwhelmed by information and worry, so the information architecture needed to be transparent and clear without adding to that load.

I opted for a simple, scannable card layout. Each card shows whether the ingredient aligns with the user’s diet (yes or no), the AI’s confidence score for that determination, and a short educational reason explaining why the ingredient does or doesn’t fit the diet. The design prioritizes scannability and trust for an anxious user.
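
The card’s contents map naturally onto a small record type. The Python sketch below assumes a 0 to 1 confidence scale. The `IngredientCard` name, its field names and the plain-text rendering are hypothetical stand-ins for the real UI.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class IngredientCard:
    """One scannable result card (names are hypothetical, not the app's schema)."""
    ingredient: str
    aligned: bool      # does the ingredient fit the user's diet? yes/no
    confidence: float  # model's confidence in the verdict, assumed 0.0 to 1.0
    reason: str        # short educational explanation

    def __post_init__(self) -> None:
        if not 0.0 <= self.confidence <= 1.0:
            raise ValueError("confidence must be between 0.0 and 1.0")

    def render(self) -> str:
        """Plain-text card: verdict first so it can be scanned at a glance."""
        verdict = "Yes" if self.aligned else "No"
        return (f"{self.ingredient}: {verdict} "
                f"({self.confidence:.0%} confidence)\n  Why: {self.reason}")
```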

Prioritization & Execution

My priorities were clear:

- Achieve 100% accuracy.

- Find the lowest token usage cost.

- Find the fastest latency responses.

I executed a series of tests, systematically isolating variables like model choice, ‘thinking mode,’ and prompt engineering.

| Model & Configuration | Tokens | Latency | Accuracy | Notes |
|---|---|---|---|---|
| Gemini 2.5 Flash (Thinking, no Search) | 2,450 | 20s | Not 100% | Inaccurate, slow, expensive. |
| Baseline: Gemini 2.5 Flash (Thinking, Search) | 3,595 | 21s | 100% | Accurate, but slow and expensive. |
| Gemini 2.5 Flash (Non-Thinking, Search) | 1,930 | 16s | 100% | Better, but still room for improvement. |
| Final: Gemini 2.5 Flash Lite (Non-Thinking, Search, Prompt-Optimized) | 1,396 | 12s | 100% | Winner. Drastic cost & speed improvement. |
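
The three priorities amount to a simple selection rule. First keep only the configurations that reached 100% accuracy, then take the one with the fewest tokens, and break any ties on latency. Here is a small Python sketch applied to the table’s own figures. `TrialResult` and `pick_winner` are illustrative names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TrialResult:
    config: str
    tokens: int
    latency_s: float
    fully_accurate: bool


# Figures copied from the results table above.
RESULTS = [
    TrialResult("Gemini 2.5 Flash (Thinking, no Search)", 2450, 20.0, False),
    TrialResult("Gemini 2.5 Flash (Thinking, Search)", 3595, 21.0, True),
    TrialResult("Gemini 2.5 Flash (Non-Thinking, Search)", 1930, 16.0, True),
    TrialResult("Gemini 2.5 Flash Lite (Non-Thinking, Search, Prompt-Optimized)", 1396, 12.0, True),
]


def pick_winner(results: list[TrialResult]) -> TrialResult:
    """Accuracy is a hard filter; then minimize tokens, then latency."""
    accurate = [r for r in results if r.fully_accurate]
    if not accurate:
        raise ValueError("no configuration reached 100% accuracy")
    # Tuple key gives lexicographic order: tokens first, latency as tie-breaker.
    return min(accurate, key=lambda r: (r.tokens, r.latency_s))
```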

The Solution

My tests showed that the lightest model I tried, Gemini 2.5 Flash Lite, gave me exactly what I wanted when combined with Google Search and a highly specific system prompt to control its behavior.

I’ve repeated this test several times. LLM outputs are non-deterministic, so the numbers vary from run to run. Token usage averages around 1,444 and response time around 11 seconds, give or take a second. On my test set it has also been correct 100% of the time. That is a significant improvement over Gemini 2.5 Flash (non-thinking, with Google Search), which used 1,930 tokens and took 16 seconds.

My system prompt is outlined like this:

- The AI’s role, plus an instruction not to output any text until the last step.

- Step 1: Extract ingredients list.

- Step 2: Parse and clean the list.

- Step 3: Do a web search on the cleaned list.

- Step 4: Build a nicely formatted table with the answers.

- Final output instructions, which restate those in the opening role and output paragraph.
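
The outline above can be assembled into a single prompt string. The wording below is hypothetical, because the actual prompt text isn’t published here. Only the ordering follows the outline: the role and the instruction to stay silent, then four numbered steps, then a closing restatement of the output rule.

```python
# Hypothetical prompt wording; only the structure mirrors the outline.
ROLE = (
    "You are an ingredient-analysis assistant for a user on a therapeutic diet. "
    "Do not output any text until the final step."
)
STEPS = [
    "Extract the ingredients list from the label image.",
    "Parse and clean the list: remove marketing terms such as 'Non-GMO' and "
    "'Natural', and split 'and/or' ingredients into separate entries.",
    "Search the web for each ingredient in the cleaned list.",
    "Build a formatted table showing, for each ingredient, whether it aligns "
    "with the user's diet, your confidence, and a short reason.",
]
FINAL = "Output only the table from the final step, with no other text."


def build_system_prompt(role: str, steps: list[str], final: str) -> str:
    """Join the role, numbered steps, and closing output rule into one prompt."""
    numbered = [f"Step {i}: {step}" for i, step in enumerate(steps, start=1)]
    return "\n\n".join([role, *numbered, final])
```

In Google’s `google-genai` Python SDK, a string like this would typically be passed as `system_instruction` in `types.GenerateContentConfig`, with the Google Search tool enabled alongside it.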

The Impact

By iterating on the prompt and model selection, I achieved a final result that was:

- 61% Cheaper: Reduced token consumption from 3,595 to 1,396, a massive win for operational costs.

- 43% Faster: Cut user-facing latency from 21 seconds to 12 seconds, creating a much better user experience.

- 100% Accurate: Maintained perfect accuracy on the test set, showing that the cost savings did not come at the expense of quality.
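
Both percentages come from the relative-reduction formula: the baseline value minus the final value, divided by the baseline value. Here is a quick Python check using the baseline and final figures from the table. `percent_reduction` is just an illustrative helper.

```python
def percent_reduction(before: float, after: float) -> float:
    """Relative reduction from `before` to `after`, as a percentage."""
    if before <= 0:
        raise ValueError("before must be positive")
    return (before - after) / before * 100


tokens_cut = percent_reduction(3595, 1396)  # (3595 - 1396) / 3595, about 61.2%
latency_cut = percent_reduction(21, 12)     # (21 - 12) / 21, about 42.9%
```

The averaged figures from repeated runs (about 1,444 tokens and 11 seconds) give roughly 60% fewer tokens and 48% lower latency.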

Lessons Learned

This project validated that for certain use cases, smart, structured prompt engineering can outperform complex, multi-agent architectures.

As a PM, understanding the fundamentals of how these models work (e.g., how tokens translate to cost) is a superpower for building commercially viable AI products.
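
Token counts turn into cost through per-token pricing. Providers usually quote it per million tokens, with separate rates for input and output tokens. Here is a minimal Python sketch. The prices used in any example call are placeholders, not Google’s actual rates.

```python
def request_cost(input_tokens: float, output_tokens: float,
                 input_usd_per_m: float, output_usd_per_m: float) -> float:
    """Dollar cost of one request, given per-million-token input/output prices."""
    return (input_tokens * input_usd_per_m
            + output_tokens * output_usd_per_m) / 1_000_000
```

This has two consequences. First, with a fixed model and a fixed input/output mix, cost scales linearly with tokens, so a 61% token cut is a 61% cost cut. Second, switching from Flash to Flash Lite also changes the per-token rates, so the dollar saving from the switch should be recomputed with each model’s own published prices.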

Always establish a baseline. Without knowing the cost and speed of the first working version, I wouldn’t have been able to measure the massive impact of my optimizations.